It’s virtually certain that computers will replace humans in many situations.

The blockbuster movie “Titanic” had more than a casual meaning to University of Pennsylvania professor Norman Badler. As he watched the great ocean liner plunge, he saw hundreds of his grandchildren going down with the ship, tumbling hopelessly along the sloping decks into the icy grip of the North Atlantic.

The grandchildren were the digital “actors” used in the film’s Oscar-winning special effects. They are descendants of Badler’s baby, a landmark piece of software with the simple name Jack, developed at Penn nearly 15 years ago to make three-dimensional representations of human beings.

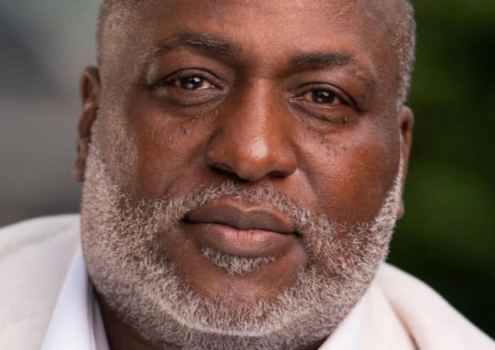

Badler is widely regarded as a pioneer in the field of making virtual humans, computer-generated characters that simulate, joint by joint, even muscle by muscle, the actions of their real-world counterparts.

Virtual humans such as Jack stand in for real people inside virtual environments. They conduct ergonomic tests on new products still in the design stage, saving businesses the time and expense of building physical prototypes. They join the fighting force in military simulator programs. Some have been used for medical and space applications.

And, thanks to improvements in computing power and motion-capture techniques, a new type of virtual human is emerging: characters that copy humans so precisely they are finding jobs in Hollywood.

“Jack was groundbreaking,” said Linda Jacobson, “virtual reality evangelist” for Silicon Graphics Inc., whose computers are widely used in virtual human modeling. The “Titanic” effects, created by Digital Domain Inc. using SoftImage software, represent a sophisticated evolution of Jack’s technology, she said.

Hollywood-enhanced versions notwithstanding, typical virtual humans look a lot like cartoon or video-game characters, though the similarity usually ends there.

Fred Flintstone really does seem prehistoric compared with DI-Guy, a rifle-toting virtual infantryman from Boston Dynamics Inc. designed to behave like a real soldier in military training simulations. DI stands for dismounted infantry.

Fred and his cartoon contemporaries are animated in advance, all their actions predetermined. DI-Guy, on the other hand, can stop marching, drop to the ground and begin a belly crawl on command. Tell him to walk to any place in his virtual environment and, courtesy of his computer programming, he knows automatically how he should bend his joints and move his muscles to get there, with no need for additional animation. Tell him to get sick from being gassed, and he doubles over.

“Some of us like to consider virtual humans to be creations that exist in real time. We can interact with them. You can hold a conversation with them. They respond to our interactive requests,” said Badler, director of Penn’s Center for Human Modeling and Simulation, part of the School of Engineering and Applied Science.

Some video games offer behavior choices for the characters, but they don’t approach the level of interactivity of an intricately programmed virtual human, Badler said. “Those games don’t let you out of the box, as it were. You can’t have a synthetic soccer game and all of a sudden some of the guys decide to walk off the field and have pizza. When we and others build virtual humans we try to give those characters a wide range of general behaviors so they could do things out of the box.”

Virtual humans created through motion capture may soon migrate from Hollywood to the boardroom, or at least their faces may. It’s an idea Badler calls “virtual conferencing.” Rather than holding a video conference or satellite meeting to bring together remote participants, you create realistically modeled digital stand-ins, avatars, that convene by computer and use the exact body motions and voices of the real people.

“You see the virtual table and all the participants sit around that table in their virtual selves, and it appears to be a meeting,” Badler said.

Several research institutions are looking into the idea. At Bell Laboratories in Murray Hill, N.J., Eric Petajan has written software that lets him track a speaker’s facial movements using a camera linked to one computer, then render an animated version of the person’s face on a remote computer. The person receiving the transmission sees a character that duplicates the facial and head movements of the speaker.

Petajan says such a system can be used by call centers as a way of communicating with customers live over the Internet. “You can represent the company in form of a face consistently and make it look as attractive as you want, and it can be driven by any operator.”