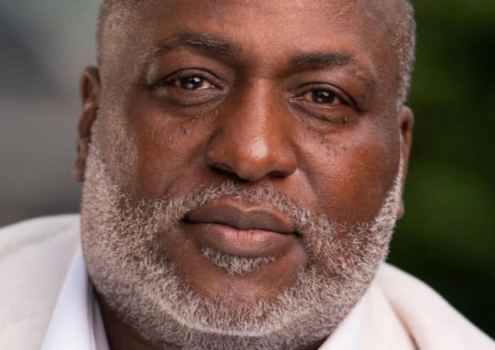

If the face is a window into the soul, then Javier Movellan has peered deeply into the human condition.

His research team has studied more than 100,000 faces, analyzing each one for the smallest shifts in facial muscles–a lexicon of emotional expression. A computer scans the faces 30 times a second and then squirrels away the information in a bulging databank.

Pausing to gather his thoughts, Movellan rubs his eyes and contemplates the face of the young woman on his computer screen. She seems cheerful, but her eyes squint slightly–a hint of vexation?

There is no quick way for Movellan to say, but somewhere in the trillions of bits of information stored in his computer, he is convinced, there is an answer.

For the last decade, the University of California San Diego psychologist has traveled a quixotic path in search of the next evolutionary leap in computer development: training machines to comprehend the human mystery of what we feel.

Movellan’s devices can identify hundreds of ways faces show joy, anger, sadness and other emotions. The computers, which operate by recognizing patterns learned from a multitude of images, eventually will be able to detect millions of expressions.

Scanning dozens of points on a face, the devices see everything, including what people may try to hide: an instant of confusion or a fleeting grimace that betrays a cheerful front.

Such computers are the beginnings of a radical movement known as “affective computing.” The goal is to reshape the notion of machine intelligence.

It finds inspiration in Hal, the eerily alluring supercomputer of “2001: A Space Odyssey,” which transcended mere computation with astute emotional skills and even a sense of duty. Compared with its impassive astronaut companions, Hal seemed the most human figure in the 1968 film.

Affective computing would transform machines from slaves chained to the limits of logic into thoughtful, observant collaborators. Such devices may never replicate human emotional experience, but if their developers are correct, even modest emotional talents would change machines from data-crunching savants into perceptive actors in society.

At stake are multibillion-dollar markets for electronic tutors, robots, advisers and even psychotherapy assistants. With other pioneers of this new realm, Movellan, a quiet, 41-year-old Spaniard, is turning the field of artificial intelligence, or AI, upside down.

Movellan is part of a growing network of scientists working to disprove long-held assumptions that computers are logical geniuses but emotional dunces.

The ability to interpret markers for emotion–facial expressions, vocal tones and metabolic responses such as blood pressure–may seem like crude first steps. Yet experts see machine intelligence, unswayed by human frailty and bias, as an eventual advantage. They envision machines that know us better than we know ourselves.

Some say that without the ability to experience emotions–far beyond today’s technology–perceptive machines would offer simplistic, unreliable readings of human feelings. Others recoil at the prospect, suggesting that if machines perceive, store and catalog people’s emotional responses, they would open a new assault on personal privacy.

But if scientists are right about the potential of today’s research, emotion machines would force a debate that could redefine intelligence, artificial or human, and shed new light on the core of humanness–what it means to feel.

“Modern AI is offering us [a] realization that … the essence of intelligence is in our capacity to perceive patterns, deal with uncertainty and operate successfully in the natural world,” Movellan said.

Affective computing updates an age-old fascination. In some versions of the ancient Jewish myth, the clay creature Golem gains human desires when a slip of paper inscribed with the name of God is placed in its mouth. Like Pinocchio and Frankenstein’s monster, Golem is a touchstone for the often frightful preoccupation with turning inanimate objects into sentient beings.

The word “robot,” derived from the Czech word for “forced labor,” was coined in a 1920 stage play in which machines assume the drudgery of factory production, then develop feelings and turn against their makers. Hal in “2001” was programmed with intuition and empathy to keep astronauts company, only to become a murderer.

Scientists don’t foresee machines with Hal’s emotional skills soon, but they already have debunked AI orthodoxy considered sacrosanct only five years ago–that logic is the one path to machine intelligence.

For Terrence Sejnowski, director of the Institute for Neural Computation at UCSD and Movellan’s mentor, the pursuit of emotion machines began a decade ago when he viewed Sexnet, a program designed to distinguish male from female faces that had been stripped of cultural cues like hair and cosmetics. In a test against people, the computer proved the better judge.

Sejnowski began to imagine computers that see past faces to the emotions behind them. Now he and Movellan are helping to create a digital compendium of human emotion, “a catalog of how people react to the world.”

The basis of that catalog is a coding system developed in the 1970s by UC San Francisco psychologist Paul Ekman, who classified dozens of facial muscle movements into 44 discrete units. These “action units” define the meaning of raised eyebrows and furrowed brows. Experts in Ekman’s method recognize combinations of movements that correspond to dozens of variations on basic expressions like joy, surprise, anger, fear, sadness and disgust, which are interpreted with remarkable consistency across cultures.

Movellan’s team videotapes subjects who show a range of emotions. The researchers feed the images into a computer, then use pattern-recognition software to train the computer to make Ekman assessments and to generalize from one person to the next.
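
To make the idea of training on labeled faces concrete, here is a minimal sketch in Python. It is not the team's software: the three measurements per face, the sample values and the nearest-centroid method are all assumptions chosen for illustration. It averages the labeled examples for each emotion, then assigns a new face to the closest average.

```python
from collections import defaultdict
from math import dist


def train_centroids(samples):
    """Average the feature vectors of each label; returns label -> mean vector."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: [sum(column) / len(column) for column in zip(*vectors)]
        for label, vectors in grouped.items()
    }


def classify(centroids, features):
    """Return the label whose average vector is closest to the new face."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical measurements per face, each scaled 0..1:
# (cheek raise, lip-corner pull, brow lowering). Values are invented.
training = [
    ((0.9, 0.8, 0.1), "joy"),
    ((0.7, 0.9, 0.0), "joy"),
    ((0.1, 0.0, 0.9), "anger"),
    ((0.2, 0.1, 0.8), "anger"),
]

centroids = train_centroids(training)

# A face from a person who was not in the training set.
new_face = (0.8, 0.7, 0.2)
print(classify(centroids, new_face))
```

A real system would draw on far more measurements, many more subjects and a stronger learning method, but the shape is the same: labeled examples go in, and a rule that carries over to unseen faces comes out.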

Smiles and wrinkles are first steps. Researchers are adding body language, vocal tones, speech recognition and metabolic signals to give computers a richer mix from which to draw conclusions.

But understanding is a far different and more difficult process. “You don’t get emotions by manipulating 0s and 1s,” said John Searle, a UC Berkeley philosopher known for challenging the intellectual underpinnings of AI. “Simulation of digestion won’t digest pizza.”

Ronnie Stangler, chairwoman of the American Psychiatric Association’s technology committee, said top clinicians realize that “it’s the richness of our history, our personal experience and our relationships that make us … appreciate the emotional state in the larger context of a person’s life.”

But the ability of machines to quantify emotions provides a strong incentive for corporations or governments to capture the data. Stangler said the prospect opens a range of new privacy dangers. “Can you imagine those same credit bureaus that know the size of our mortgage and our credit card debt knowing also how anxious we are?”